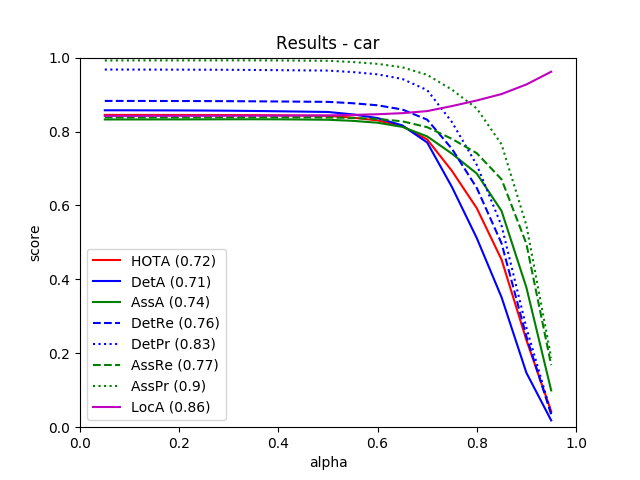

Over all 29 test sequences, our benchmark computes the HOTA tracking metrics (HOTA, DetA, AssA, DetRe, DetPr, AssRe, AssPr, LocA) [1] as well as the CLEAR MOT, MT/PT/ML, identity switch, and fragmentation [2,3] metrics.

The tables below show all of these metrics.

| Benchmark | HOTA | DetA | AssA | DetRe | DetPr | AssRe | AssPr | LocA |
|---|---|---|---|---|---|---|---|---|
| CAR | 72.21 % | 71.09 % | 74.04 % | 75.98 % | 83.28 % | 76.57 % | 89.97 % | 86.15 % |
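HOTA [1] is evaluated separately at each localization threshold α. At a given α, DetA = |TP| / (|TP| + |FN| + |FP|), AssA = (1/|TP|) Σ_{c∈TP} |TPA(c)| / (|TPA(c)| + |FNA(c)| + |FPA(c)|), and HOTA_α = sqrt(DetA_α · AssA_α). The following is a minimal sketch of these sub-metrics at a single α. It assumes that the matching between ground-truth and predicted detections has already been done and is given as a list of (gt_id, pr_id) pairs. The function and argument names are hypothetical, and this is not the benchmark's own evaluation code. LocA is omitted because it requires the per-match similarity scores.

```python
from collections import Counter
from math import sqrt


def hota_at_alpha(matches, gt_dets_per_id, pr_dets_per_id):
    """Sketch of the HOTA sub-metrics at one localization threshold alpha.

    matches: list of (gt_id, pr_id) pairs, one per true-positive match at this
        alpha (the matching step itself is assumed to be done already).
    gt_dets_per_id: dict mapping gt_id -> number of ground-truth detections.
    pr_dets_per_id: dict mapping pr_id -> number of predicted detections.
    """
    tp = len(matches)
    fn = sum(gt_dets_per_id.values()) - tp  # unmatched ground-truth detections
    fp = sum(pr_dets_per_id.values()) - tp  # unmatched predicted detections
    if tp == 0:
        return dict.fromkeys(
            ("HOTA", "DetA", "AssA", "DetRe", "DetPr", "AssRe", "AssPr"), 0.0)

    # Detection scores.
    det_a = tp / (tp + fn + fp)
    det_re = tp / (tp + fn)
    det_pr = tp / (tp + fp)

    # |TPA(c)| is identical for all TPs c that share the same (gt_id, pr_id) pair.
    ass_a = ass_re = ass_pr = 0.0
    for (gt_id, pr_id), tpa in Counter(matches).items():
        fna = gt_dets_per_id[gt_id] - tpa  # gt_id detections not matched to pr_id
        fpa = pr_dets_per_id[pr_id] - tpa  # pr_id detections not matched to gt_id
        # Weight by tpa: each of these tpa TPs contributes the same score.
        ass_a += tpa * tpa / (tpa + fna + fpa)
        ass_re += tpa * tpa / (tpa + fna)
        ass_pr += tpa * tpa / (tpa + fpa)
    ass_a, ass_re, ass_pr = ass_a / tp, ass_re / tp, ass_pr / tp

    return {
        "HOTA": sqrt(det_a * ass_a),
        "DetA": det_a, "AssA": ass_a,
        "DetRe": det_re, "DetPr": det_pr,
        "AssRe": ass_re, "AssPr": ass_pr,
    }


# Toy example (not benchmark data): GT track "A" has 4 detections, "B" has 2;
# predicted track 1 has 3 detections, track 2 has 2.
scores = hota_at_alpha([("A", 1)] * 3 + [("B", 2)], {"A": 4, "B": 2}, {1: 3, 2: 2})
# Here DetA = 4/7 and AssA = (3*3/4 + 1*1/3) / 4.
```

The reported scores average each quantity over α ∈ {0.05, 0.10, …, 0.95}. Because HOTA is averaged after the square root is taken, the reported HOTA need not equal sqrt(DetA · AssA) computed from the averaged DetA and AssA.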

| Benchmark | TP | FP | FN |
|---|---|---|---|
| CAR | 30274 | 4118 | 1101 |

| Benchmark | MOTA | MOTP | MODA | IDSW | sMOTA |
|---|---|---|---|---|---|
| CAR | 84.53 % | 84.36 % | 84.83 % | 101 | 70.76 % |
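The CLEAR MOT accuracy scores [2] combine the error counts into a single number. MOTA = 1 − (FN + FP + IDSW) / GT, where GT is the number of ground-truth detections. Its detection-only counterpart is MODA = 1 − (FN + FP) / GT. MOTP averages localization quality over the matched pairs. The following is a minimal sketch under a few assumptions. For MOTP, it uses a similarity such as IoU, so higher is better, in line with the percentage reported above; [2] itself phrases MOTP in terms of a distance. Per-frame matching is assumed to be done already. For ID switches, it follows one common convention: a switch is counted whenever the tracker ID matched to a ground-truth object differs from the last ID that object was matched to. All helper names are hypothetical.

```python
def mota(num_fn, num_fp, num_idsw, num_gt):
    """MOTA = 1 - (FN + FP + IDSW) / GT, GT = number of ground-truth detections."""
    return 1.0 - (num_fn + num_fp + num_idsw) / num_gt


def moda(num_fn, num_fp, num_gt):
    """MODA = 1 - (FN + FP) / GT, i.e. MOTA without the identity term."""
    return 1.0 - (num_fn + num_fp) / num_gt


def motp(similarities):
    """Mean similarity (assumed IoU-like, higher is better) over all matched pairs."""
    return sum(similarities) / len(similarities) if similarities else 0.0


def count_id_switches(matched_ids):
    """Count ID switches along one ground-truth trajectory.

    matched_ids: per-frame tracker ID matched to this object, or None where it
    is unmatched. A switch is counted whenever the matched ID differs from the
    last ID the object was matched to, even across unmatched frames.
    """
    switches = 0
    last_id = None
    for pr_id in matched_ids:
        if pr_id is None:
            continue  # unmatched frames do not reset the last matched ID
        if last_id is not None and pr_id != last_id:
            switches += 1
        last_id = pr_id
    return switches


# Toy counts (not benchmark data).
idsw = count_id_switches([7, 7, None, 9, 9, 7])  # 7 -> 9 -> 7: two switches
print(mota(2, 1, idsw, 10), moda(2, 1, 10))
```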

| Benchmark | MT rate | PT rate | ML rate | FRAG |
|---|---|---|---|---|
| CAR | 74.77 % | 12.46 % | 12.77 % | 210 |
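Following [3], a ground-truth trajectory is classified by how much of its length the tracker output covers. It is mostly tracked (MT) if the coverage exceeds 80%, mostly lost (ML) if it is below 20%, and partially tracked (PT) otherwise. Fragmentation counts how often a ground-truth trajectory is interrupted in the tracking result. The following is a minimal sketch under some assumptions. Each ground-truth trajectory is given as a per-frame list of booleans, with True where it is matched. A fragment is counted each time tracking resumes after an interruption (tracked segments minus one), which is one common convention. The strict boundary comparisons and all names are assumptions.

```python
from collections import Counter


def coverage_class(tracked_flags, mt_thresh=0.8, ml_thresh=0.2):
    """Classify one GT trajectory as MT, PT or ML by the fraction of its frames
    in which it is matched (strict > / < comparisons assumed at the boundaries)."""
    ratio = sum(tracked_flags) / len(tracked_flags)
    if ratio > mt_thresh:
        return "MT"
    if ratio < ml_thresh:
        return "ML"
    return "PT"


def mt_pt_ml_rates(trajectories):
    """Fraction of GT trajectories in each class; the three rates sum to 1."""
    counts = Counter(coverage_class(t) for t in trajectories)
    return {c: counts[c] / len(trajectories) for c in ("MT", "PT", "ML")}


def count_fragments(tracked_flags):
    """Number of times tracking resumes after an interruption
    (number of tracked segments minus one)."""
    segments = 0
    prev = False
    for flag in tracked_flags:
        if flag and not prev:
            segments += 1  # a new tracked segment starts in this frame
        prev = flag
    return max(segments - 1, 0)


# Toy trajectories (not benchmark data): True where the GT object is matched.
trajs = [
    [True] * 9 + [False],   # 90% covered -> MT
    [True] * 10,            # 100% covered -> MT
    [True, False] * 5,      # 50% covered, tracking resumes 4 times -> PT
    [True] + [False] * 9,   # 10% covered -> ML
]
rates = mt_pt_ml_rates(trajs)
frag = sum(count_fragments(t) for t in trajs)
```

Every trajectory falls into exactly one class, so the three rates sum to 1, just as the MT, PT and ML rates in the table sum to 100 %.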

| Benchmark | # Dets | # Tracks |
|---|---|---|
| CAR | 31375 | 731 |


[1] J. Luiten, A. Ošep, P. Dendorfer, P. Torr, A. Geiger, L. Leal-Taixé, B. Leibe: HOTA: A Higher Order Metric for Evaluating Multi-object Tracking. IJCV 2020.

[2] K. Bernardin, R. Stiefelhagen: Evaluating Multiple Object Tracking Performance: The CLEAR MOT Metrics. JIVP 2008.

[3] Y. Li, C. Huang, R. Nevatia: Learning to associate: HybridBoosted multi-target tracker for crowded scene. CVPR 2009.